The Wrong Questions Cost You the Right Hire: What to Do When Your Screener Does Not Know the Job

Josh Gafni

April 24, 2026

A senior attorney in a midsize firm recently described a familiar scene. The firm needed to hire a junior associate with a specific practice-area background. The HR coordinator ran the screening calls. The coordinator was capable, professional, and diligent. The coordinator was also three practice areas away from the work the new associate would actually do, and it showed. The coordinator asked generic questions, the candidates gave generic answers, and the final handoff to the hiring attorney was a stack of indistinguishable notes. The attorney had to repeat the screening calls himself to figure out which candidate was strong.

This is not an indictment of the coordinator. This is the predictable output of a system in which a non-specialist is asked to evaluate specialist work. The system will fail the same way, with the same frequency, regardless of the coordinator's individual skill. The fix is to change the system.

This post describes four specific fixes. Each fix is practical, each fix is low-cost, and each fix moves the screening process from "the coordinator's best guess" to "a structured output the hiring manager can actually use."

Why Non-Specialist Screening Fails

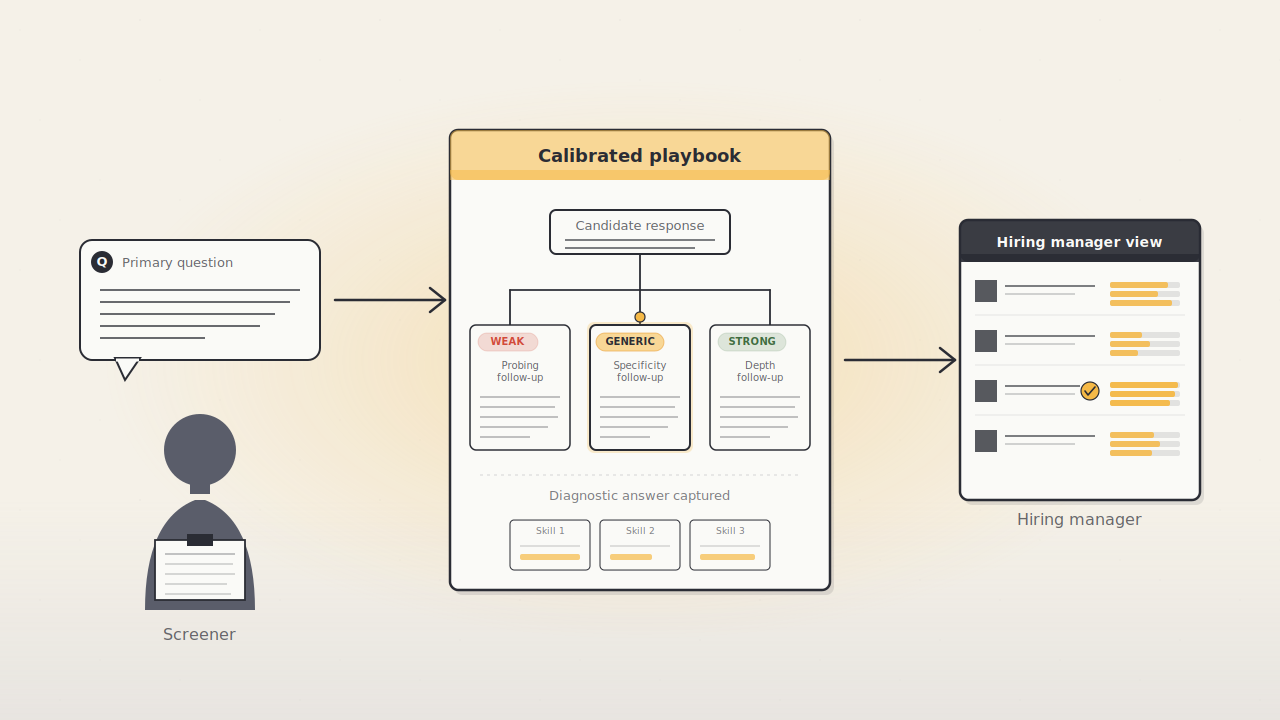

Screening is a pattern-recognition task. The screener hears an answer, compares the answer to a mental library of what good answers sound like for this role, and forms a judgment. A subject-matter expert can do this well. A screener who has never done the work cannot, because the mental library does not exist.

When the library is missing, three failures follow.

The screener asks questions that do not test the underlying skill. The questions are generic, the answers sound equivalent, and the distinguishing signal is buried.

The screener cannot follow up. A weak answer and a strong answer, to a non-specialist, can sound similar on the first pass. A specialist would ask the second question that reveals the gap. A non-specialist moves on.

The screener cannot translate answers for the hiring manager. The notes become descriptive rather than diagnostic: "seemed confident," "explained background clearly." The hiring manager gets vibes instead of evidence.

The workaround most firms default to is "have the hiring manager do the screens." That workaround is expensive, it bottlenecks the role, and it defeats the purpose of delegation. A better workaround is a system that gets the right signal without requiring the screener to be a specialist at all.

Fix One: Replace the Phone Screen With a Role-Specific Challenge

The phone screen is the wrong instrument for the job. A non-specialist running a phone screen will produce a non-specialist's notes, every time. The fix is not to coach the screener into asking better questions in real time. The fix is to replace the live conversation with a structured artifact the candidate produces on their own, before any human time is spent.

The mechanism looks like this. The hiring manager defines, up front, a real business problem the candidate would actually face in the role: not a hypothetical, not a behavioral question, but an actual problem. Each candidate is asked to walk through how they would approach it in a short response, usually a one- or two-minute video. Every candidate sees the same challenge. Every candidate gets the same opportunity to think it through. Every response lands in the same place, ready to compare side by side.

The hiring manager has now done the specialist work once, in defining the challenge, and the specialist work is reused on every candidate. The screener is no longer in the position of trying to extract signal from a generic conversation. The signal is already in the response, captured directly, ready for the hiring manager to evaluate.

The setup takes the hiring manager about an hour per role. The challenge can be reused for future hires into the same role, which means the investment compounds.

Fix Two: Standardized Answer Capture

When every candidate responds to the same challenge, the hiring manager can compare candidates on the same axis. Noise drops. Signal rises. A direct comparison becomes possible. Structured interviews, in which every candidate answers the same questions scored against the same rubric, have been shown in meta-analytic research to predict job performance more reliably than unstructured interviews (Schmidt and Hunter).

Standardized capture can take several forms. A written response to a structured prompt. A recorded video response to a role-specific challenge. A short work sample scored against a rubric. Each form has trade-offs. Video response is usually the best fit for professional-services hiring because the manager can evaluate the candidate's communication skill, reasoning, and presence at the same time as the substance of the answer.

The discipline that matters is consistency. Every candidate responds to the same challenge. Every candidate gets the same time allowance. Every response is reviewed against the same rubric. The hiring manager can then say, credibly, that the advancement decisions were made on comparable evidence.
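For teams that track scores in a spreadsheet or a small script, the consistency described above can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the criterion names, the integer weights, and the 1-to-5 scale are all assumptions made up for the example, and a real rubric would come from the hiring manager.

```python
from dataclasses import dataclass

# Hypothetical rubric: criterion -> integer weight.
# Criterion names and weights are illustrative assumptions only.
RUBRIC = {"problem_framing": 2, "technical_accuracy": 2, "communication": 1}


@dataclass
class Response:
    candidate: str
    scores: dict  # criterion -> score on an assumed 1-5 scale


def weighted_score(response, rubric=RUBRIC):
    """Score one response against the shared rubric.

    Raises if any criterion is unscored, so every candidate is
    judged on exactly the same axes.
    """
    missing = set(rubric) - set(response.scores)
    if missing:
        raise ValueError(
            f"{response.candidate} is missing scores for: {sorted(missing)}"
        )
    return sum(weight * response.scores[name] for name, weight in rubric.items())


def rank(responses, rubric=RUBRIC):
    """Return responses ordered from highest to lowest weighted score."""
    return sorted(responses, key=lambda r: weighted_score(r, rubric), reverse=True)


if __name__ == "__main__":
    a = Response("Candidate A", {"problem_framing": 4, "technical_accuracy": 5, "communication": 3})
    b = Response("Candidate B", {"problem_framing": 3, "technical_accuracy": 3, "communication": 5})
    for r in rank([a, b]):
        print(r.candidate, weighted_score(r))
```

The point of the missing-score check is the same as the point of the rubric: a candidate who was not scored on a criterion cannot be compared on it, so the script refuses to rank rather than guess.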

A secondary benefit: standardized capture protects the firm in a disputed hiring decision. When every candidate responded to the same challenge and every response is preserved, the firm can show its work.

Fix Three: Video Review Beats Live Re-Interview

The traditional solution to a weak screen is a second interview, live, with the hiring manager. That solution takes 30 to 60 minutes per candidate. Four candidates advanced means two to four hours of calendar time for the hiring manager.

Video review is faster. A two-minute video response is a two-minute review. The hiring manager can scrub to the moment that matters. The hiring manager can skip the small talk and the logistical warm-up. The hiring manager can review four candidates in the time a single live interview would have consumed.

The format also reveals different information. A live interview is a conversation, which means the candidate's answer is shaped by the interviewer's body language and follow-ups. A recorded response is a monologue, which means the candidate's unaided reasoning is visible. Both formats have value. For a second-level screen, the recorded response usually wins on efficiency and objectivity.

A hiring manager who learns to review video responses at speed recovers real hours per week. Those hours are the scarcest resource in any hiring process. Giving them back to the manager is a meaningful return.

Fix Four: Documentation That Protects the Firm

Every challenge issued, every response received, every advancement or rejection decision, and the reason for each decision should be recorded. Not because the firm expects a dispute. Because the discipline of documenting forces the team to articulate the decision criteria, which tends to produce better decisions.

The documentation does three jobs at once.

Job one, better decisions. A hiring manager who has to write down the reason for a rejection writes better reasons. Sloppy judgments rarely survive having to be explained.

Job two, better onboarding of future hires. The documentation of what "good" looked like in the finalists becomes the reference material for defining the role, calibrating future challenges, and training the next reviewer.

Job three, legal defensibility. If the advancement decisions are ever questioned, the firm has a record that shows the process was structured, the criteria were consistent, and the reasons were specific. California's new hiring regulations require four-year record retention for automated-decision systems, up from the prior two-year baseline (Jackson Lewis). The same retention period is a sensible practice for manual screening decisions even outside California.
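A decision log does not need special software. As a rough sketch, each decision can be stored as one record that refuses to exist without a specific reason and that knows how long it should be kept. The field names, the allowed outcomes, and the four-year default below are assumptions for illustration; the default simply mirrors the retention period mentioned above.

```python
from dataclasses import dataclass
from datetime import date

# Assumed default, mirroring the four-year period discussed above.
RETENTION_YEARS = 4


@dataclass(frozen=True)
class ScreeningDecision:
    # All field names are hypothetical, chosen for this example.
    candidate_id: str
    challenge_id: str
    outcome: str      # "advance" or "reject"
    reason: str       # the specific, written reason for the outcome
    decided_on: date

    def __post_init__(self):
        if self.outcome not in ("advance", "reject"):
            raise ValueError("outcome must be 'advance' or 'reject'")
        if not self.reason.strip():
            raise ValueError("a specific reason is required for every decision")

    def retain_until(self, years=RETENTION_YEARS):
        """Date through which this record should be kept."""
        target_year = self.decided_on.year + years
        try:
            return self.decided_on.replace(year=target_year)
        except ValueError:
            # Decided on Feb 29 and the target year is not a leap year.
            return self.decided_on.replace(year=target_year, day=28)


if __name__ == "__main__":
    decision = ScreeningDecision(
        candidate_id="c-001",
        challenge_id="junior-associate-v1",
        outcome="reject",
        reason="Did not address the core issue posed in the challenge.",
        decided_on=date(2026, 4, 24),
    )
    print(decision.retain_until())
```

Requiring a non-empty reason at the moment the record is created is the code-level version of job one: the decision cannot be logged until someone has articulated why it was made.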

What This Looks Like In Practice

A boutique accounting firm we spoke with redesigned its junior-hire screening process along these lines. The managing partner defined a real client scenario as the role challenge, wrote a short rubric for scoring responses, and set up the response page. Every candidate recorded a short video response. The partner reviewed the videos against the rubric. The total screening time per candidate dropped from roughly 45 minutes to roughly 8 minutes of partner time. The quality of advancement decisions improved. Two hires in, the firm reported that the calibrated challenge was producing noticeably better matches than the previous process.

The partner's review time, cumulatively across four candidates, was about 32 minutes. The previous live-interview approach would have taken three to four hours. The time saved is meaningful, the decision quality is higher, and the firm has a reusable challenge for the next hire.
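The arithmetic behind these figures is simple enough to check by hand, and a short script makes it easy to rerun with a firm's own numbers. The sketch below uses the approximate per-candidate figures quoted above (roughly 45 minutes live, roughly 8 minutes of video review, four candidates); the function name is made up for the example.

```python
def total_minutes(candidates, minutes_per_candidate):
    """Total reviewer time for a screening round, in minutes."""
    return candidates * minutes_per_candidate


if __name__ == "__main__":
    candidates = 4
    live = total_minutes(candidates, 45)   # 180 minutes, i.e. three hours
    video = total_minutes(candidates, 8)   # 32 minutes
    saved = live - video                   # 148 minutes

    print(f"Live interviews: {live} min ({live / 60:.1f} h)")
    print(f"Video review:    {video} min")
    print(f"Time recovered:  {saved} min ({saved / 60:.1f} h)")
```

At 45 minutes per candidate the live approach comes to three hours before any scheduling overhead, which is the low end of the range cited above.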

The Role of McCoy IQ

McCoy IQ was built around this specific workflow. A hiring team starts with a job description, McCoy IQ helps draft a role-specific challenge in minutes, candidates respond with a short video, and every response lands in one place to scan and compare. The output is a set of comparable, documented, reviewable candidate evaluations. The tool does the structuring work that most firms do not have the bandwidth to build internally.

You do not need McCoy IQ to apply the four fixes in this post. The logic holds for any structured screening process, delivered on any platform. We built McCoy IQ because most firms are too stretched to design the process themselves, and a prebuilt layer is faster than a custom build.

Three Quick Questions

What is the biggest mistake non-specialist screeners make? Asking generic questions that do not test the underlying skill, then producing notes the hiring manager cannot act on. The fix is to take the screen out of the screener's hands entirely and replace it with a role-specific challenge every candidate responds to.

Is video review actually faster than a live interview? Yes. A two-minute video is a two-minute review, and the hiring manager can skip the small talk and scrub to the moment that matters. Four candidates can be reviewed in the time a single live interview would have consumed.

Do I really need to document every advancement decision? Yes. Documentation produces better decisions, better training material for future hires, and legal defensibility if a decision is ever questioned.

McCoy IQ replaces phone screens with a role-specific challenge so non-specialists can produce specialist-quality output. Learn more at McCoyIQ.com.

Works Cited

Jackson Lewis P.C. "California's New AI Regulations Take Effect Oct. 1: Here's Your Compliance Checklist." Jackson Lewis, jacksonlewis.com/insights/californias-new-ai-regulations-take-effect-oct-1-heres-your-compliance-checklist.

Schmidt, Frank L., and John E. Hunter. "The Validity and Utility of Selection Methods in Personnel Psychology: Practical and Theoretical Implications of 85 Years of Research Findings." Psychological Bulletin, vol. 124, no. 2, 1998, pp. 262-274.